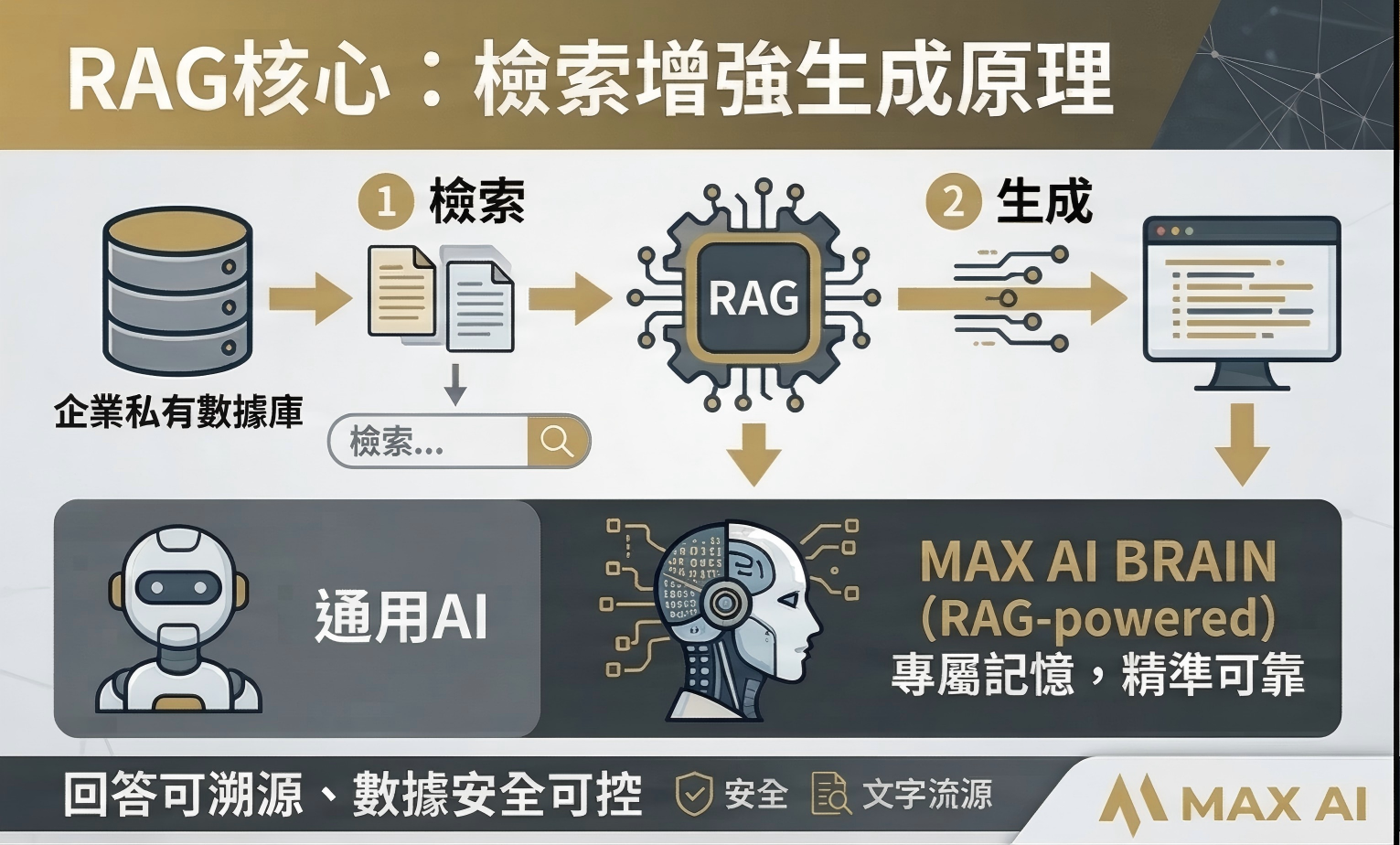

What Is RAG and Why Does It Matter?

Retrieval-Augmented Generation, or RAG, is a technique that combines the power of large language models with the precision of your own company data. Instead of relying solely on a model's pre-trained knowledge, RAG retrieves relevant documents from your internal knowledge base and passes them to the model as context, so that its answers are more accurate and specific to your business.

This matters because generic AI models do not know your company's policies, product details, or internal procedures. RAG bridges that gap by giving the AI access to your organization's own documents, which stay as up to date as the content you choose to index.

For enterprises dealing with large volumes of documents, contracts, manuals, and policies, RAG transforms how employees access information. Rather than searching through folders or asking colleagues, anyone can ask a question in natural language and receive a precise answer in seconds.

How RAG Works Under the Hood

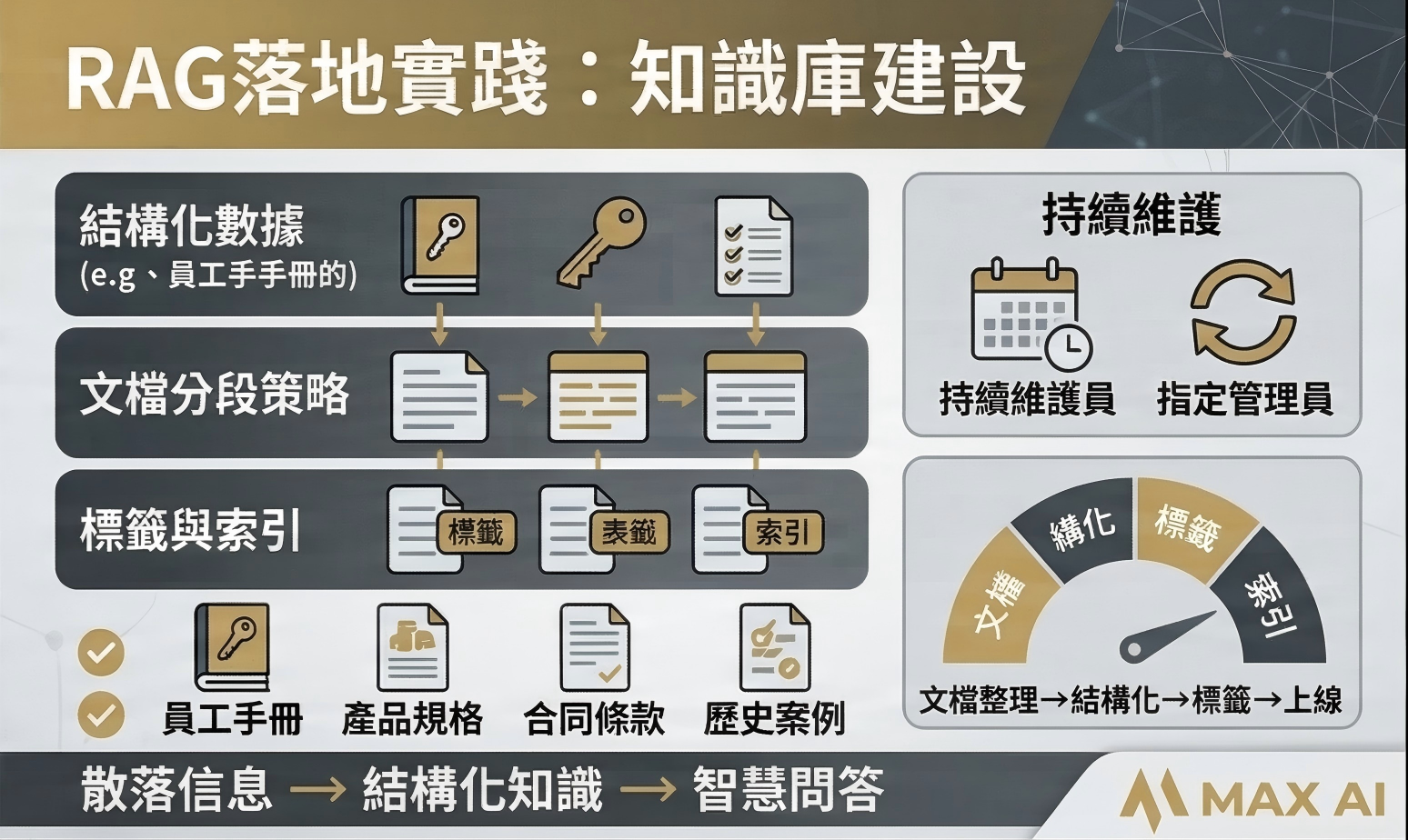

At a high level, a RAG system operates in two stages. First, your documents are processed, split into meaningful chunks, and converted into numerical representations called embeddings. These embeddings are stored in a vector database optimized for fast similarity searches.
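To make the indexing stage concrete, here is a minimal Python sketch. It is illustrative only, and every name in it is hypothetical: `chunk_text` splits documents into overlapping word windows, `embed` uses a simple hashing trick in place of a real embedding model, and `InMemoryVectorStore` is a plain list standing in for a vector database.

```python
import hashlib
import math
import re

DIM = 256  # hypothetical embedding size for this toy example


def chunk_text(text: str, max_words: int = 50, overlap: int = 10) -> list[str]:
    """Split text into overlapping word windows (a simple stand-in for smarter chunking)."""
    words = text.split()
    step = max_words - overlap  # must be > 0, so overlap < max_words
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + max_words]))
        if start + max_words >= len(words):
            break  # this window already reached the end of the document
    return chunks


def embed(text: str) -> list[float]:
    """Toy 'hashing trick' embedding: NOT a real model, just a placeholder."""
    vec = [0.0] * DIM
    for token in re.findall(r"[a-z0-9]+", text.lower()):
        bucket = int(hashlib.sha256(token.encode()).hexdigest(), 16) % DIM
        vec[bucket] += 1.0
    norm = math.sqrt(sum(v * v for v in vec)) or 1.0
    return [v / norm for v in vec]  # unit length, so dot product = cosine similarity


class InMemoryVectorStore:
    """Hypothetical stand-in for a vector database: a list of (embedding, chunk) pairs."""

    def __init__(self) -> None:
        self.entries: list[tuple[list[float], str]] = []

    def add(self, chunk: str) -> None:
        self.entries.append((embed(chunk), chunk))


def index_documents(docs: list[str], store: InMemoryVectorStore) -> int:
    """Stage 1: chunk every document, embed each chunk, and store it. Returns chunk count."""
    count = 0
    for doc in docs:
        for chunk in chunk_text(doc):
            store.add(chunk)
            count += 1
    return count


if __name__ == "__main__":
    # Sample documents, invented for illustration.
    docs = [
        "Employees receive 15 days of paid annual leave per year.",
        "Expense claims must be submitted within 30 days of purchase.",
    ]
    store = InMemoryVectorStore()
    print(index_documents(docs, store), "chunks indexed")
```

In a real deployment, the toy `embed` would be replaced by a call to an embedding model, and the list by a vector database that supports approximate nearest-neighbour search. Overlapping chunks are a common choice because they keep a sentence that straddles a chunk boundary retrievable from at least one chunk.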

Second, when a user asks a question, the system converts the query into an embedding, searches the vector database for the most similar document chunks, and feeds those chunks to the language model as context. The model uses this context to generate an answer that is both fluent and grounded in your own data.
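The query-time stage can be sketched the same way. This is a minimal illustration under stated assumptions, not how BRAIN or any particular vector database works: `embed` is a toy word-count vector standing in for a real embedding model, the brute-force `retrieve` stands in for a vector database's similarity search, and the instruction wording in `build_prompt` is a hypothetical example. The final call to a language model is left out.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Toy sparse 'embedding': token counts. A placeholder for a real embedding model."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse vectors; 0.0 if either is empty."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def retrieve(query: str, chunks: list[str], k: int = 2) -> list[str]:
    """Embed the query and return the k most similar chunks (brute-force search)."""
    q = embed(query)
    ranked = sorted(chunks, key=lambda c: cosine(q, embed(c)), reverse=True)
    return ranked[:k]


def build_prompt(query: str, context: list[str]) -> str:
    """Combine the retrieved chunks and the question into one prompt for the model."""
    numbered = "\n".join(f"[{i + 1}] {c}" for i, c in enumerate(context))
    return (
        "Answer the question using only the context below. "
        "If the context does not contain the answer, say so.\n\n"
        f"Context:\n{numbered}\n\nQuestion: {query}\nAnswer:"
    )


if __name__ == "__main__":
    # Sample chunks, invented for illustration.
    chunks = [
        "Annual leave: employees receive 15 days of paid leave per year.",
        "Expense claims must be submitted within 30 days.",
        "The office Wi-Fi password rotates monthly.",
    ]
    question = "How many days of annual leave do employees get?"
    print(build_prompt(question, retrieve(question, chunks, k=1)))
```

Telling the model to answer only from the supplied context, and to say when the context is insufficient, is one common way to keep answers tied to your documents rather than to the model's general training data.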

Real-World Applications for SMEs

RAG is not just for large corporations. SMEs can use it to build internal knowledge assistants that help new employees onboard faster, answer customer questions about complex product lines, or help ensure that compliance-related queries reference the latest regulations, provided those regulations have been added to the knowledge base.

At MAX AI, our BRAIN service leverages RAG technology to build private, secure knowledge bases for businesses across Macau. We handle everything from document ingestion and parsing to deploying a natural language query interface that your team can start using immediately.

The impact can be significant: companies that deploy RAG-powered knowledge bases typically see a 70 percent reduction in time spent searching for information, along with improved consistency and accuracy in the answers their teams provide.

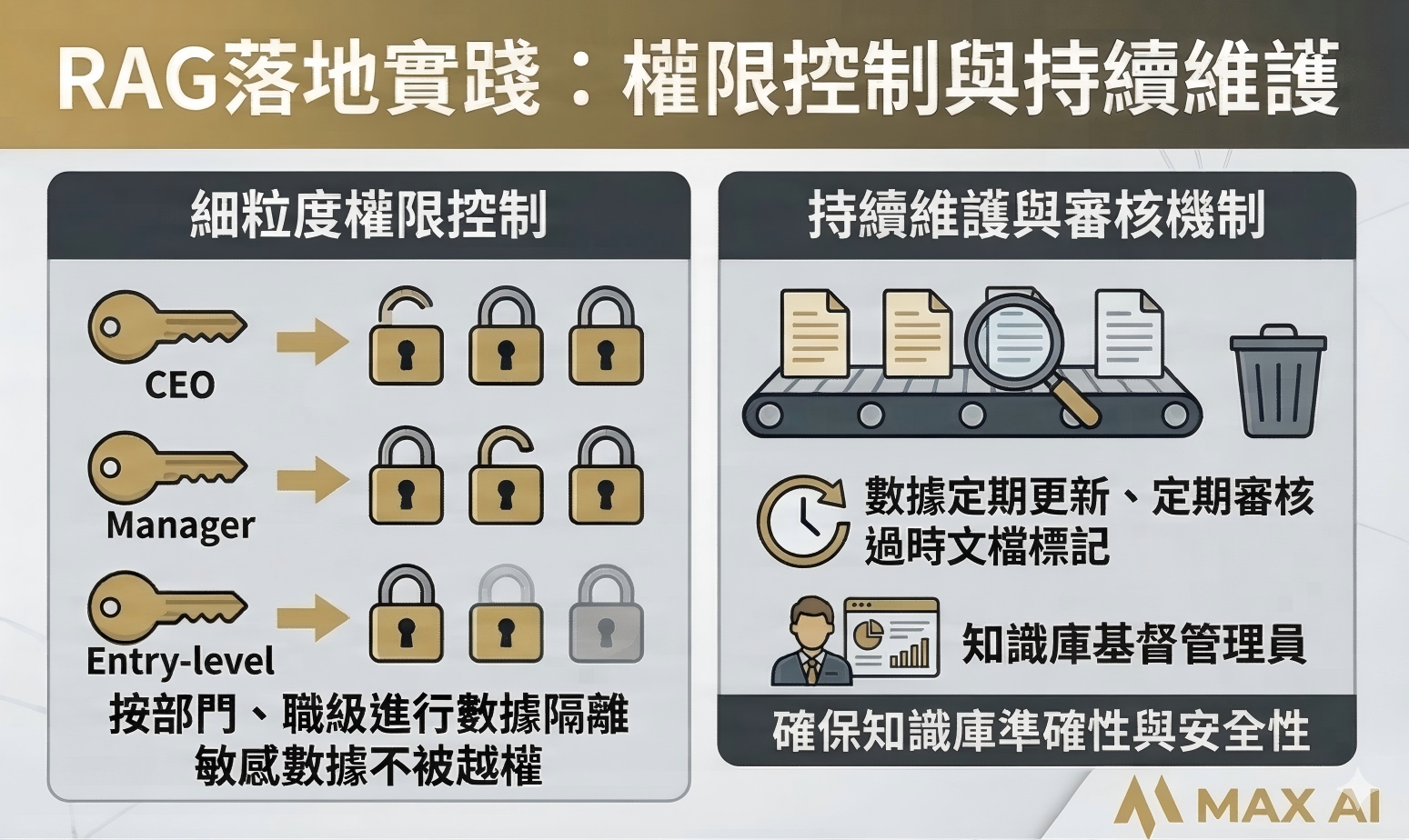

Getting Started with RAG in Your Organization

Implementing RAG begins with gathering and organizing your most critical documents. This includes policy manuals, product catalogs, training materials, and frequently asked questions. The quality and coverage of your source material directly determine the quality of the AI's answers.

From there, a structured implementation process covers document parsing, embedding generation, vector database setup, and interface design. With the right partner, the entire process from kickoff to a working prototype can take as little as two weeks.